
Business case template for a knowledge management tool

Most internal proposals get skimmed or ignored because they read like a product pitch. This template flips the format: it leads with the cost of the current problem, shows three options side by side, and frames the tool as the solution to a measurable loss, not a nice-to-have.

A business case for a knowledge management tool should open with the cost of the current problem, not the features of the proposed tool. Managers approve work that stops a bleed. This template gives you seven sections your manager will read: problem statement, cost of doing nothing, proposed solution, options considered, cost and benefit analysis, rollout plan, and success metrics. Copy it, fill in your team’s numbers, and put the tool name in the last third of the page.

The structure assumes a manager with 15 minutes and no patience for vendor language.

Section 1: Problem statement (3 to 5 sentences)

State the problem in your team’s words, not in knowledge management vocabulary. Name two or three specific symptoms the manager has already seen.

Example: “Our team makes decisions in Zoom and Slack, then relitigates them six weeks later because no one can find the original thread. Our last three onboarding cycles took more than eight weeks. Two senior people carry most of the context in their heads.”

End with a one-line thesis: “We do not have a structured record of what we have decided, who owns what, or why. Every person we hire or lose makes this worse.”

Section 2: Cost of doing nothing

This is the section that gets the proposal approved. Put a number on the problem. Use the ROI calculator for AI knowledge tools to compute team-level losses.

Show at least three costs:

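- Search time. McKinsey has estimated that knowledge workers spend around 1.8 hours a day gathering information. IDC has put the figure closer to 2.5 hours, and Panopto's 2018 survey found employees spend 5.3 hours a week waiting for information from colleagues or re-creating knowledge that already exists. Even at a conservative 30 minutes a day, a 20-person team loses 10 hours a day, or roughly 2,300 hours a year over about 230 working days.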

- Repeated decisions. Estimate how many times per quarter your team relitigates a prior decision. A 90-minute meeting with six people costs nine person-hours. Multiply that by your quarterly count, then by four for the year.

- Onboarding delay. If a new hire reaches full productivity four weeks later than they could, that is roughly $5,000 to $15,000 per hire at typical knowledge-work salaries. The page on the cost of lost team knowledge per employee breaks this down by role.

Total the annual cost. Put the number in bold. Every later section is compared against this figure.
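The three costs above reduce to a few multiplications. The minimal Python sketch below shows one way to total them. Every field name (such as `TeamInputs` and `loaded_hourly_cost`) and every example value is hypothetical, and two choices are assumptions rather than figures from the sources: about 230 working days a year, and pricing each week of onboarding delay as a full 40-hour week of lost productivity. It computes hours first, then converts them to dollars at one loaded hourly cost.

```python
from dataclasses import dataclass


@dataclass
class TeamInputs:
    """Hypothetical inputs; replace every value with your team's own numbers."""
    team_size: int
    search_minutes_per_day: float          # per person
    working_days_per_year: int             # assumption, e.g. about 230
    relitigated_meetings_per_quarter: int
    meeting_hours: float
    meeting_attendees: int
    hires_per_year: int
    onboarding_delay_weeks: float
    loaded_hourly_cost: float              # salary plus overhead, in dollars


def cost_of_doing_nothing(t: TeamInputs) -> dict:
    """Return the three Section 2 costs in hours and dollars, plus a dollar total."""
    hours = {
        # Minutes per person per day, across the whole team and working year.
        "search": t.team_size * t.search_minutes_per_day / 60 * t.working_days_per_year,
        # Each relitigated meeting costs duration x attendees; four quarters a year.
        "repeated_decisions": t.relitigated_meetings_per_quarter * 4
        * t.meeting_hours * t.meeting_attendees,
        # Assumption: each week of delay is a 40-hour week of lost productivity.
        "onboarding_delay": t.hires_per_year * t.onboarding_delay_weeks * 40,
    }
    dollars = {name: h * t.loaded_hourly_cost for name, h in hours.items()}
    # The sum is computed before the "total" key is added.
    dollars["total"] = sum(dollars.values())
    return {"hours": hours, "dollars": dollars}


# Illustrative values only: 20 people, 30 minutes a day, three repeats a quarter.
team = TeamInputs(
    team_size=20, search_minutes_per_day=30, working_days_per_year=230,
    relitigated_meetings_per_quarter=3, meeting_hours=1.5, meeting_attendees=6,
    hires_per_year=4, onboarding_delay_weeks=4, loaded_hourly_cost=50,
)
result = cost_of_doing_nothing(team)
print(f"Annual cost of doing nothing: ${result['dollars']['total']:,.0f}")
# → Annual cost of doing nothing: $152,400
```

Keeping hours and dollars separate lets your manager challenge the hourly rate without re-deriving the hours.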

Section 3: Proposed solution

Describe the solution in one paragraph, in plain terms. Do not name the tool yet.

Example: “We need a system that captures decisions, tasks, and context from the meetings, calls, and documents our team already produces, organizes them into a searchable team memory, and keeps it current without anyone writing pages. It must connect to the tools we already use (Zoom, Google Meet, Slack, Linear or Jira) so no one has to change their workflow.”

This framing describes outcomes, not features. If the manager pushes back later, you can point to each outcome and ask which one is not worth solving.

Section 4: Options considered

Present three options side by side. Three options show that you did the work without drowning the reader.

- Option A: Do nothing. Cost per year: the number from Section 2.

- Option B: Expand an existing tool (a better wiki, tighter meeting notes, a shared doc template). Cost: staff time to maintain it, plus the cost of doing nothing, which returns as the wiki decays. Cite your team’s own history with internal wikis.

- Option C: Adopt a self-building knowledge tool such as Internode, which pulls decisions and tasks out of your team’s conversations into a structured knowledge base. Cost: the license fee, plus roughly 4 to 6 hours of setup, plus pilot review time. For the architectural difference, see the page explaining what an AI knowledge base that builds itself is.

Keep each option to three or four sentences.

Section 5: Cost and benefit analysis

Show the math. A small, boring table beats a polished narrative here.

| Item | Year 1 |
|---|---|
| License (example) | $X |
| Setup and internal training | $Y |
| Time saved (from Section 2) | $Z |
| Net benefit | $Z − ($X + $Y) |

If the net benefit is positive in year one, you have a case. If it is only positive starting in year two, say so directly and include the two-year picture.
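The table is one subtraction per year, and the two-year picture is the same subtraction carried forward. The Python sketch below tracks the cumulative net benefit. It assumes setup and training are a one-time cost paid in year one, the license recurs every year, and savings can differ by year (for example, lower in year one while the pilot runs). The function name and all figures are hypothetical. The year-one value equals $Z − ($X + $Y) from the table.

```python
def cumulative_net_benefit(license_per_year: float, setup_and_training: float,
                           savings_by_year: list[float]) -> list[float]:
    """Cumulative net benefit at the end of each year (hypothetical model).

    Setup and training are charged once, up front; the license is charged
    every year; savings are the dollar value of time saved in that year.
    """
    running = -setup_and_training
    totals = []
    for savings in savings_by_year:
        running += savings - license_per_year
        totals.append(running)
    return totals


# Illustrative numbers: partial savings in year one, fuller savings in year two.
totals = cumulative_net_benefit(license_per_year=12000, setup_and_training=6000,
                                savings_by_year=[15000, 40000])
print(totals)  # → [-3000, 25000]

# First year in which the cumulative figure turns positive (None if never).
first_positive_year = next((i + 1 for i, v in enumerate(totals) if v > 0), None)
print(first_positive_year)  # → 2
```

In this example the case only works in year two, which is exactly the situation the paragraph above says to state directly.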

Section 6: Rollout plan (4 to 6 weeks, one team)

Propose a pilot, not a rollout. Managers approve pilots more easily than deployments.

- Week 1: Connect Zoom, Google Meet, and Slack for one team. Define three questions the system should answer by week four.

- Weeks 2 to 3: The team uses the tool normally. The chat agent answers questions from real meetings. Tasks get created and decisions get updated only after a human approves the change.

- Week 4: Review. Can we find past decisions faster? Did we repeat any discussions? Did the drafter produce a usable project brief?

- Weeks 5 to 6: Decide to continue, expand, or stop.

Section 7: Success metrics

Pick three metrics you can measure before and after the pilot.

- Average time to find a past decision (before: usually 10 to 30 minutes; target: under 2 minutes).

- Number of meetings per month that revisit a prior decision.

- Time to first useful contribution from new hires.

State clearly what “success” looks like at week four. If none of these move, the pilot did not work, and you end it cleanly. That pre-commitment to a fail condition is what makes managers trust the proposal.
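One way to make the fail condition concrete is to write it down as a rule before the pilot starts. The Python sketch below is a hypothetical version. It treats all three metrics as lower-is-better, stops the pilot if none improved on its baseline, and maps the remaining outcomes to the Section 6 choices of continue or expand. That mapping, the class and function names, and the example values are assumptions to agree with your manager up front, not measurements.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    name: str
    baseline: float   # measured before the pilot
    week_four: float  # measured at the week-four review
    target: float     # agreed before the pilot starts

    def improved(self) -> bool:
        # All three Section 7 metrics are "lower is better".
        return self.week_four < self.baseline

    def met_target(self) -> bool:
        return self.week_four <= self.target


def pilot_verdict(metrics: list[Metric]) -> str:
    """Hypothetical decision rule for the week 5 to 6 review."""
    if not any(m.improved() for m in metrics):
        return "stop"      # pre-committed fail condition: nothing moved
    if all(m.met_target() for m in metrics):
        return "expand"    # every target reached
    return "continue"      # some movement, but not every target met


# Illustrative values only.
metrics = [
    Metric("Minutes to find a past decision", baseline=20, week_four=3, target=2),
    Metric("Meetings per month revisiting a decision", baseline=4, week_four=2, target=2),
    Metric("Days to first useful contribution", baseline=15, week_four=15, target=10),
]
print(pilot_verdict(metrics))  # → continue
```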

Where Internode fits in the template

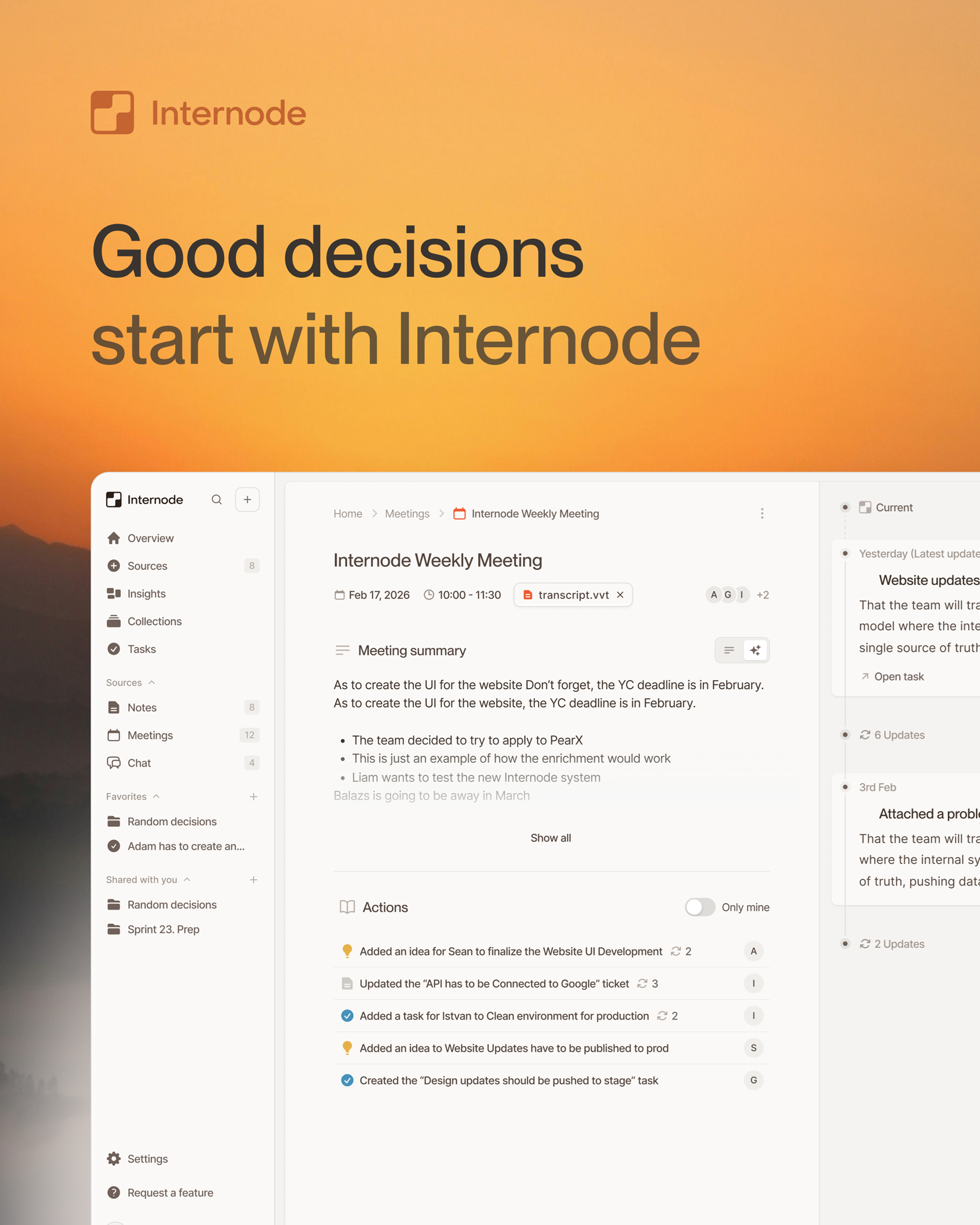

Internode is named in Section 4 as one option. It matches the outcomes in Section 3: it reads transcripts from Zoom, Google Meet, and Slack, builds a structured record of decisions, tasks, topics, and goals, and keeps Linear or Jira in sync through a two-way integration. The chat agent proposes changes that a human approves before anything is written. You never maintain pages.

For the numbers to plug in, start with the ROI calculator for AI knowledge tools. For the pitch framing, read how to propose new software to your manager.

Sources

- McKinsey Global Institute, “The social economy: Unlocking value and productivity through social technologies” (July 2012): mckinsey.com/industries/technology-media-and-telecommunications/our-insights/the-social-economy

- Susan Feldman, “The High Cost of Not Finding Information,” IDC White Paper (2001), reprinted in KMWorld: kmworld.com/Articles/Editorial/Features/The-high-cost-of-not-finding-information-9534.aspx

- Panopto, “Workplace Knowledge and Productivity Report” (2018), for the 5.3 hours-per-week finding used in Section 2: panopto.com/resource/valuing-workplace-knowledge/

